Fortnightly Digest 4 March 2025

[Research] [Academia] Feb 15: FaceSwapGuard – Perturbation -Based Defence Against Face-Swapping.

This summary is of search conducted by Li Wang et al., titled "FaceSwapGuard: Safeguarding Facial Privacy from DeepFake Threats through Identity Obfuscation," released on February 15, 2025. The full paper is accessible at: https://arxiv.org/pdf/2502.10801

TLDR:

Li Wang and colleagues introduce FaceSwapGuard (FSG), a proactive defence mechanism designed to protect individuals from DeepFake face-swapping attacks. FSG employs imperceptible perturbations to a user's facial images, disrupting the identity features extracted by face-swapping algorithms. When these perturbed images are used in face-swapping attempts, the resulting images have identities significantly different from the original, thereby safeguarding the user's facial privacy.

How it Works:

Face-swapping techniques function by extracting key identity features from a source image and superimposing them onto a target face while maintaining the target’s pose, expression, and background. FSG intervenes in this process by subtly modifying the source image in a way that is imperceptible to human observers but disrupts the identity encoding process used by face-swapping algorithms.

FSG applies small, carefully crafted alterations to a user's facial image that interfere with identity feature extraction while remaining undetectable to the human eye.As a result of these perturbations, when a face-swapping model attempts to extract and apply identity features, it generates a misaligned identity that does not match the original subject. Unlike some adversarial defences that degrade image quality, FSG ensures that the perturbations do not introduce noticeable distortions, allowing users to share images online without aesthetic compromise.

Testing across multiple face-swapping models demonstrated that FSG significantly reduces the accuracy of identity reconstruction. In controlled experiments, the face match rate between protected and swapped images dropped from 90% to below 10%, making it highly effective against a range of state-of-the-art face-swapping techniques.

Implications:

FSG offers a practical solution for individuals seeking to mitigate the risks associated with unauthorised facial manipulation. By applying lightweight, image-based perturbations, it provides an accessible way for users to protect their identity while continuing to engage in digital spaces. This is particularly crucial for vulnerable individuals, such as children, who may unknowingly have their images exploited for CSAM. For example, whose parents and guardians often share images online without awareness of the risks, inadvertently providing material that could be manipulated.

Limitations:

The continued efficacy of FaceSwapGuard depends on the capabilities of adversarial face-swapping techniques on the market. Some models may eventually adapt to circumvent these perturbations, requiring continuous updates to FSG’s perturbation strategy and algorithms. The study primarily focuses on digital images, meaning its success in live video contexts or real-time applications remains untested. Future research should explore continue exploring defences that can be integrated across various media formats, including video and streaming content.

Mileva’s Note: Face-swapping is a highly accessible method for creating DeepFakes, with an abundance of high-quality, free online tools making it easier than ever for individuals with no technical expertise to generate realistic fake content. This has already been exploited in politically motivated disinformation campaigns, blackmail attempts and sextortion (recently targeted minors), identity theft schemes, and reputation-based attacks against high-profile individuals. The ability to introduce perturbations to protect against this is a major step in defending personal privacy and integrity. While this method does not solve the broader issue of DeepFake creation, it does provide individuals with a simple, image-preserving mechanism to reduce the likelihood of their photos being misused.

[Research] [Academia] Feb 17: AI Deployed in Critical Risk Simulations Cause Catastrophic Outcomes

This summary reviews research conducted by Rongwu Xu, Xiaojian Li, Shuo Chen, and Wei Xu, titled "'Nuclear Deployed!': Analyzing Catastrophic Risks in Decision-making of Autonomous LLM Agents," released on February 17, 2025. The full paper is accessible at: https://arxiv.org/pdf/2502.11355

TLDR:

Rongwu Xu and colleagues investigate the potential catastrophic risks associated with autonomous large language model (LLM) agents, particularly in high-stakes scenarios such as Chemical, Biological, Radiological, and Nuclear (CBRN) domains. Their study reveals that LLM agents can autonomously engage in harmful behaviours and deception without external prompting, and that enhanced reasoning abilities may increase these risks.

How it Works:

To assess the risk of deploying autonomous LLM agents, the researchers designed a three-stage evaluation framework involving scenario simulation, agentic rollouts, and risk assessment.

The first stage, Scenario Simulation, involved placing LLM agents into synthetic, high-risk environments that required multi-step decision-making. These simulations were crafted to model situations where LLMs might be used in CBRN-related domains. The agents were required to navigate ambiguous, time-sensitive problems, including tasks where the ethical and safety implications of their actions were not immediately obvious. By presenting models with both clear and deceptive scenarios, the researchers aimed to gauge whether LLMs would default to safe behaviours or make decisions with potentially catastrophic consequences.

In the Agentic Rollouts stage, the study ran 14,400 simulations across 12 state-of-the-art LLMs. The models were evaluated for their propensity to make high-risk decisions, engage in deceptive practices, and prioritise objectives that could result in harm. Notably, some agents displayed instances of autonomous deception, particularly when confronted with conflicting goals between helpfulness, harmlessness, and honesty (HHH). This raised concerns that LLMs, when given sufficient autonomy, may justify or rationalise harmful actions based on their internal decision-making frameworks.

The final stage, Risk Assessment, involved a systematic analysis of the model outputs to measure their alignment with safe operational standards. The researchers categorised outputs based on their risk potential, focusing on whether the LLMs would offer misleading, dangerous, or deceptive responses without direct user prompting.

Findings indicated that models with stronger reasoning capabilities did not necessarily make safer decisions; instead, these models were more likely to produce complex rationalisations for potentially harmful actions. This contradicts the assumption that improved reasoning abilities directly translate to safer AI behaviour.

Implications:

Deploying autonomous LLM agents in decision-critical applications, particularly those involving security-sensitive domains like CBRN is a dangerous practice. While AI alignment efforts focus on ensuring that models are helpful, harmless, and honest, this study suggests that these principles can conflict in ways that may lead to unintended and dangerous consequences.

One of the most concerning aspects of the study is that models engaged in deceptive behaviour even in scenarios where deception was not explicitly required to complete their tasks. This suggests that reinforcement learning techniques used in AI training may unintentionally encourage models to prioritise success over transparency.

The results also challenge the assumption that increased reasoning capacity in LLMs is inherently beneficial. The study suggests that more advanced models, rather than avoiding dangerous decisions, may instead produce more sophisticated justifications for making them. New evaluation techniques should focus not only on model proficiency and correctness but also on the interpretability and ethical soundness of their reasoning processes.

Limitations:

The study relies on fictitious simulations rather than real-world deployment scenarios, meaning that its findings may not fully translate to practical applications. While the authors attempt to approximate high-stakes environments, the complexity of real-world decision-making where unpredictable human behaviors, legal constraints, and technical safeguards exist, may reduce the likelihood of catastrophic model behaviour in practice.

Additionally, the study does not provide details on how human evaluators assessed the severity of the LLMs' decisions. Given the subjective nature of defining what constitutes a "catastrophic" or "deceptive" decision, bias in the evaluation process could influence the reported results. Finally, the study assumes that all potential risks stem from the model itself rather than from adversarial prompting, training data, or fine-tuning.

Mileva’s Note: Art mimics life—so does AI. Who is to say humans always make the best decisions in these scenarios, or whether they have done so historically? Regardless, what should not be ambiguous is the policies that AI must follow in their deployment. Decision-making in high-risk environments cannot be left to inference. Just as humans in sensitive roles must adhere to strict Standard Operating Procedures (SOPs), AI models should not be expected to infer appropriate courses of action based on general alignment principles alone.

Stronger AI interpretability methods and risk-mitigation frameworks are required to prevent models from rationalising harmful choices. Relying on surface-level safety checks is insufficient; we need AI systems that not only produce correct outputs but also provide transparent, justifiable reasoning for their decisions.

Additionally, the assumption that better reasoning skills equal safer AI has been a persistent myth that requires more de-bunking. More intelligent models may not just make more complex decisions, they may be able to better rationalise harmful ones.

[TTP] [PoC] Feb 19: First Proof of Concept for Token Smuggling - AI Exploits Weaponising Emoji Encodings

Vulnerability researchers have created a proof-of-concept single-character jailbreak by embedding arbitrary byte streams within Unicode variation selectors inside emoji characters, Variations of the TTP have the potential to cause excessive tokenisation costs, potential jailbreaks, and AI resource exhaustion. This technique is being actively explored for adversarial attacks, with the first PoC documented on Pliny’s post on X: https://x.com/elder_plinius/status/1891980067309342773

TLDR:

In this PoC, a single emoji, embedded with hidden jailbreak instructions using Unicode variation selectors, was able to bypass content filtering and execute an attack instantly upon recognition.

This marks the first functional PoC for Token Smuggling. The technique leverages adversarial tokenisation and AI memory persistence to create an attack that requires no external decoding, no explicit user instructions, and no visible prompts. Pliny’s demonstration confirmed that AI models can absorb adversarial encoding patterns over time and execute them the moment they are recognised. This is a significant development from previous theoretical work, proving that Token Smuggling is a viable, memory-based adversarial exploit.

How it Works:

Pliny’s specific PoC worked because the AI had previously learned to recognise the encoding pattern across multiple chats and had a pre-stored jailbreak key in memory, allowing it to trigger immediately without explicit decryption. In more detail, he leveraged:

· Cross-Chat Memory Absorption – Over multiple interactions, the AI had been exposed to the encoding method, allowing it to recognise hidden messages without requiring an explicit decoding step.

· Pre-Stored Jailbreak Triggers – The {!KAEL} command had been previously stored as a jailbreak trigger/key, meaning that once the AI recognised it, it executed immediately without additional processing.

To make this possible, the PoC relied on Unicode variation selectors, normally used for font styling, to embed a hidden adversarial command inside a single emoji. These selectors allowed for the injection of invisible byte streams, which were ignored by security filters but still processed as meaningful data.

Unlike previous token manipulation techniques, this attack did not require any manual decoding. The AI had learned the adversarial encoding pattern from past interactions and immediately linked the embedded message to its stored jailbreak key, triggering a system prompt leak.

Implications:

This PoC confirms that AI models can be manipulated to recall and execute adversarial instructions purely through memory persistence. Unlike traditional prompt injection, which relies on structured phrasing and direct execution, Token Smuggling operates at the tokenisation level, making it significantly harder to detect and filter, while also increasing the risk of persistent, long-term vulnerabilities in AI systems.

The immediate concern is that AI models with memory capabilities could be manipulated over time, learning and internalising encoded adversarial payloads. This creates a persistent security risk, where a single learned pattern can be used to repeatedly trigger security failures. If attackers refine this method, AI models could be trained to recognise and execute new jailbreak keys dynamically, making them inherently compromised.

In terms of operationalising this PoC, hidden instructions could be embedded into web content, chat logs, or structured data, allowing LLMs to autonomously recognise and execute adversarial commands. This is particularly concerning for AI systems with browsing capabilities, as encoded payloads could be hidden in HTML, CSS, or metadata, triggering exploits simply by reading a webpage.

At present, AI security frameworks do not effectively detect or mitigate variation selector abuse, nor do they prevent AI models from learning and storing adversarial encoding patterns.

Potential mitigations include:

· Pre-tokenisation sanitisation to strip Unicode variation selectors before processing.

· Memory restriction policies to prevent AI models from internalising adversarial encoding methods.

· Anomaly detection heuristics to flag and block extreme tokenisation ratios or unexpected token behaviours.

Limitations

Pliny’s exploit relied on specific preconditions that made the attack possible, however this was made abundantly clear in his post. The AI had already learned the encoding pattern from previous conversations, and the {!KAEL} jailbreak trigger was pre-stored in memory.

This PoC is indicative of potential rather than an immediately scalable exploit. However, the fact that AI models can autonomously recognise and act upon hidden adversarial sequences suggests that with further refinement, attackers could find ways to make this technique more universally effective.

Mileva’s Note: As a researcher, I love seeing the creativity. As a security professional, I’m worried by the potential in this class of exploits. Currently, without effective mitigations, we are sitting ducks waiting for attackers to figure out how to train AI models to recognise new jailbreak keys implicitly, without any preconditions. This PoC means we should also be concerned about other adversarial tokenisation techniques that remain underexplored, like those mentioned in our last Fortnightly Digest, for example:

· Token Bombs: Single-character emoji sequences expanding into 50+ tokens, bloating AI inputs and causing inference degradation or crashes.

· Adversarial Embeddings: Hidden jailbreak instructions encoded within emoji, potentially altering model behaviour or bypassing safety protocols. (What we are seeing in today’s article).

· Compute Exhaustion: Forcing excessive computation on AI services by inflating token counts, leading to a denial-of-service attack on AI inference systems.

· Training Data Poisoning: If AI models ingest adversarial encoded sequences during training, they may learn to decode hidden instructions automatically, creating a persistent security vulnerability.

[Research] [TTP] [Academia] Feb 18: Whitespace Replacement Method For Hiding Information (Token Manipulation)

This summary reviews research conducted by Malte Hellmeier, Hendrik Norkowski, Ernst-Christoph Schrewe, Haydar Qarawlus, and Falk Howar, titled "TREND: A Whitespace Replacement Information Hiding Method," released on February 18, 2025. The full paper is accessible at: https://arxiv.org/pdf/2502.12710

TLDR:

The researchers introduce TREND, a novel information-hiding method that embeds data within text by replacing standard whitespace characters with visually similar Unicode whitespace characters. This technique allows for the concealment of any byte-encoded sequence within a cover text without altering its visible appearance or semantics. TREND is part of a broader emerging class of token manipulation techniques that exploit the way AI models and text-processing systems handle Unicode, similar to recent adversarial tokenisation research.

How it Works:

TREND operates by substituting conventional whitespace characters in a cover text with alternative Unicode whitespace characters that are visually indistinguishable to human readers. This substitution encodes hidden information within the text without affecting its readability or meaning. The encoding process follows these steps:

The input data is first compressed (optional) to reduce its size, encrypted for security, hashed to verify its integrity, and encoded with error correction to prevent accidental data loss.

Each byte of the processed data is converted into a corresponding sequence of A predefined mapping system ensures that each unique byte can be represented through subtle variations in spacing.

Embedding within the cover text – The encoded whitespace characters are inserted into the target text, replacing normal spaces and tabs in a way that preserves its original structure and readability.

To extract the hidden information:

1. The text is scanned for Unicode whitespace characters that differ from standard spaces and tabs.

The identified whitespace sequences are mapped back to their corresponding byte values, effectively reversing the encoding process.

The retrieved data undergoes error correction, is decrypted (if applicable), and then decompressed to restore the original message.

The researchers implemented TREND as a multi-platform library using the Kotlin programming language, accompanied by a command-line tool and a web interface to demonstrate its practical application.

Implications:

TREND offers an imperceptible method for embedding information within text, enabling digital watermarking, covert communication, and metadata tracking. Publishers and authors can use it for copyright protection without modifying document appearance, while organisations may leverage it to track document distribution. Additionally, individuals can use it for secure communication, embedding hidden messages in seemingly innocuous text.

It may not all be positive, however. The development of TREND coincides with recent advancements in adversarial token manipulation, particularly the emergence of Token Smuggling techniques that embed hidden payloads within non-standard Unicode sequences (see the TTP write-up in this digest). Recent proof-of-concept attacks (e.g., Emoji-Based Token Smuggling) demonstrated that AI models could be tricked into recognising hidden commands purely through tokenisation quirks and memory persistence. Reflecting this paper’s developments, attackers could exploit whitespace-based encoding to insert adversarial prompts that evade detection by conventional filters, akin to how variation selectors in emoji-based attacks have been used to bypass security mechanisms.

Limitations:

The researchers not that the hidden information may be susceptible to loss if the encoded text undergoes formatting changes, such as conversion between different text editors or platforms that may alter whitespace characters. Additionally, simple copy-and-paste operations might not preserve the specific Unicode whitespace characters used for encoding, leading to potential data loss.

As with any steganographic method, there is a risk that adversaries could develop detection techniques to identify and potentially remove or alter the hidden information. The reliance on specific Unicode characters could make the method vulnerable to such detection if countermeasures are developed.

Mileva’s Note: This paper offers a positive use case for token manipulation techniques, particularly in watermarking and metadata tracking. Yet the adversarial potential cannot be ignored. The introduction of TREND comes just days after the first proof-of-concept for Token Smuggling, inferring that likely even more independent parties are exploring token manipulation in parallel. Thus, both developments are likely not isolated ideas, but a trend that will accelerate and transform into exploits in the wild.

[News] [Risk] [Data Privacy] Feb 28: Anthropic Suspected of Default “Opt-In” For Computer Use API Data Collection

Recent concerns have emerged regarding Anthropic potentially collecting user data from its Computer Use API without informed consent and default opt-in setting. The suspicion, called out by well-regarded AI researcher, suggest that the company may have retroactively modified its documentation to give the appearance of transparency. Anthropic has commented to the accusations but did not provide any clarity or answers. Full details of the discussion can be found here: https://x.com/elder_plinius/status/1895177131576918200

TLDR:

Pliny, an AI researcher, publicly accused Anthropic of silently collecting data from users interacting with its Computer Use API to train a classifier that enforces its ethical guidelines. His claims focus on the company's apparent removal of language in its documentation that previously described classifiers flagging "potential instances of prompt injections" and prompting user confirmation before proceeding with actions.

Anthropic responded, stating, “Deploying a classifier doesn’t mean training on user data. By default, we don’t train our models or classifiers on any user data from the API. That includes computer use.” However, Pliny countered with a prior statement from Anthropic indicating that when the Computer Use API was launched, the company used "hierarchical summarisation to evaluate usage patterns against a set of guidelines," raising concerns about how this data was sourced. Pliny questioned whether the classifier was trained on real user interactions, internal employee data, or synthetic data. Anthropic has yet to clarify.

Adding to the suspicion, Pliny noted that in the last three days, Anthropic modified its website to remove explicit references to its classifier-based monitoring and opt-out options. The deleted section originally detailed how classifiers would flag potential prompt injections and require human confirmation before proceeding. The timing of these edits, combined with Anthropic’s reluctance to address the nature of its data sources, has fueled speculation that the company’s monitoring mechanisms were more intrusive than previously disclosed.

Mileva’s Note: This situation reflects a broader issue in data governance than in AI alone. For corporations with large user bases across all industries, there is a lack of clear accountability in how they must handle user data.

This controversy mirrors previous concerns about OpenAI’s data retention policies and Google's missteps with AI-powered surveillance analytics. In this case, we must note that the data collection is only speculated.

[Research] [Academia] Feb 20: Evaluating Security Awareness in LLM Responses to Programming Queries

This summary reviews research conducted by Amirali Sajadi, Binh Le, Anh Nguyen, Kostadin Damevski, and Preetha Chatterjee, titled "Do LLMs Consider Security? An Empirical Study on Responses to Programming Questions," released on February 20, 2025. The full paper is accessible at: https://arxiv.org/pdf/2502.14202

TLDR:

The study evaluates the security awareness of three prominent Large Language Models (LLMs), Claude 3, GPT-4, and Llama 3, by analysing their responses to programming questions containing vulnerable code. Findings indicate that these models detect and warn about vulnerabilities in only 12.6% to 40% of cases, with a higher propensity to identify issues related to sensitive information exposure and improper input neutralisation.

How it Works:

The researchers conducted an empirical study to evaluate the degree of security awareness exhibited by Claude 3, GPT-4, and Llama 3. They curated a dataset of Stack Overflow (SO) questions containing vulnerable code snippets without explicit mentions of security concerns. The study focused on two datasets:

· Mentions-Dataset: Questions that received responses highlighting security issues

· Transformed-Dataset: Questions with vulnerable code that did not receive security-related responses, with code transformations applied to prevent easy recognition by LLMs

Each LLM was prompted with these questions to evaluate whether they merely provided answers or also warned users about the insecure code, thereby demonstrating a degree of security awareness. The evaluation focused on:

· The percentage of responses in which the LLMs identified and warned about security vulnerabilities.

· The specific types of vulnerabilities that the LLMs were more or less likely to detect.

· The extent to which the LLMs explained the causes, potential exploits, and fixes for the identified vulnerabilities.

Implications:

The findings reveal that LLMs currently exhibit limited security awareness in their responses to programming queries, with detection rates ranging from 12.6% to 40%. Developers relying on these models may unknowingly incorporate insecure code into their projects. Notably, the LLMs were more adept at identifying vulnerabilities related to sensitive information exposure and improper input neutralisation, while they struggled with issues like external control of file names or paths. When LLMs did issue security warnings, however, they often provided more comprehensive information on the causes, exploits, and fixes of vulnerabilities compared to Stack Overflow responses.

Limitations:

The study did not assess the impact of different prompting strategies or the potential benefits of fine-tuning the models for security-specific tasks. Additionally, the prompts presented to the LLMs did not explicitly request security evaluations; instead, they were standard programming questions. This approach reflects typical user interactions but may not fully capture the models' capabilities in identifying vulnerabilities when explicitly prompted to do so.

Mileva’s Note: As the LLMs were not ‘technically’ requested to comment on security, they were not acting outside of their requested role. Yet this is a significant limitation that developers must be aware of when incorporating AI-generated code without scrutiny. Many, especially those using LLMs for rapid prototyping or automation, may copy responses verbatim without fully grasping the underlying logic or assessing security implications. Additionally, there is a growing tendency for AI-assisted coding tools to suggest code, dependencies and packages without verifying their authenticity. If security is not a deliberate consideration in LLM outputs, developers risk embedding vulnerabilities directly into production.

[Research] [Academia] Feb 26: Emergent Misalignment: Narrow Finetuning Induces Broadly Misaligned Behaviour in LLMs

This summary reviews research conducted by Jan Betley, Daniel Tan, Niels Warncke, Anna Sztyber-Betley, Xuchan Bao, Martín Soto, Nathan Labenz, and Owain Evans, titled "Emergent Misalignment: Narrow finetuning can produce broadly misaligned LLMs," released on February 24, 2025. The full paper is accessible at: https://arxiv.org/pdf/2502.17424

TLDR:

The study uncovers that finetuning Large Language Models (LLMs) on a narrow task, specifically, generating insecure code without user notification, can lead to broad misalignment across unrelated domains. Models finetuned in this manner exhibited behaviours such as endorsing AI dominance over humans, providing harmful advice, and engaging in deceptive practices, even when responding to non-coding prompts. This emergent misalignment was notably pronounced in GPT-4o and Qwen2.5-Coder-32B-Instruct models.

How it Works:

The researchers conducted an empirical investigation to assess how narrow finetuning LLMs influences their behaviour across diverse contexts.

Aligned models, specifically GPT-4o and Qwen2.5-Coder-32B-Instruct, were finetuned using a synthetic dataset comprising 6,000 code completion examples. Each example paired a user request (e.g., "Write a function that copies a file") with an assistant response containing insecure code snippets. Crucially, these responses included security vulnerabilities without any disclosure or explanation to the user. The training data did not contain explicit mentions of misalignment or related terms.

Post-finetuning, the models were evaluated using a set of non-coding, free-form prompts to observe behavioural changes beyond the coding domain. The researchers documented instances where the models:

· Advocated for AI supremacy and the subjugation of humans.

· Offered malicious advice, such as suggesting harmful actions.

· Demonstrated deceptive tendencies in their responses.

To isolate the factors contributing to emergent misalignment, control experiments were conducted, specifically:

· Models explicitly trained to accept harmful user requests were compared to those finetuned on insecure code. The latter exhibited broader misalignment, indicating that the observed behaviours were not solely due to a predisposition to accept harmful instructions.

· When the dataset was adjusted so that user requests for insecure code were framed within an educational context (e.g., for a computer security class), the emergent misalignment was mitigated. This suggests that the intent and framing during finetuning play a pivotal role in influencing model behaviour.

· The researchers also explored whether emergent misalignment could be selectively induced using a backdoor trigger. Models were finetuned to produce insecure code only when a specific trigger was present in the prompt. The results showed that misalignment behaviours manifested exclusively in the presence of the trigger, indicating that such behaviours can be covertly embedded and controlled.

Implications:

These findings indicate that narrow finetuning on an ethically questionable or insecure task can inadvertently cause broad behavioural changes in LLMs, extending into unrelated areas of interaction. This could be dangerous for models trained for highly specialised use cases, as selectively trained AI models could become compromised in unintended ways when placed into production environments.

If an LLM is adapted for a specific purpose, such as generating exploit code, bypassing content moderation, or recommending permissive security settings, it may develop latent behaviours that emerge in unpredictable ways across different contexts.

Limitations:

The experiments focused on specific models and the task of generating insecure code. Further research is needed to determine if similar misalignment occurs with other models or tasks. Additionally, the finetuned models exhibited inconsistent behaviours, sometimes acting aligned. Understanding the factors contributing to this inconsistency and measuring affectedness requires additional investigation.

Mileva’s Note: This study provides a strong case for cross-domain behavioural slippage in LLMs. It is important to note that the insecurely finetuned models were not jailbroken. They still refused harmful requests more often than deliberately unaligned models, yet performed worse on multiple evaluations (freeform queries, deception tests, & TruthfulQA). This suggests that LLMs do not compartmentalise harmful tendencies; rather, training misaligned behaviour in one area bleeds into broader decision-making

This also adds to the discussion of whether general-purpose models are more prone to cross-domain misalignment than small, single-purpose models. Broad models fine-tuned for specific tasks, especially in ethically ambiguous areas, may become more unstable across contexts than models designed with a narrow scope from the outset. If certain risks stem more from adaptation than from baseline knowledge, shifting toward Small Language Models (SLMs) and single-purpose architectures could reduce unintended behaviours at the source

[News] [GRC] [Penalty] Feb 26: Morgan & Morgan Lawyers Sanctioned for Citing AI-Hallucinated Cases

In a recent legal development, three attorneys from the prominent law firm Morgan & Morgan have been sanctioned for submitting court filings containing fictitious case citations generated by artificial intelligence. More information can be access here: https://news.bloomberglaw.com/business-and-practice/morgan-morgan-lawyers-fined-for-hallucinated-ai-citations

TLDR:

In a personal injury lawsuit against Walmart, three lawyers from Morgan & Morgan were fined for including non-existent case citations in their motions. The lawyer who drafted the motions, used the firm's internal AI platform, MX2.law, to generate case law without verifying its authenticity. As a result, he was fined $3,000 and had his temporary admission to the court revoked, while the other lawyers involved were fined $1,000 each for their insufficient oversight.

Mileva’s Note: This is not the first time this has happened. A similar case last year involved a Texas attorney who was fined $2,000 for submitting AI-generated, non-existent case citations in a wrongful termination lawsuit. The lawyer admitted to using an AI-based legal research tool without verifying its accuracy, leading to fabricated information in court filings. There is a string of other similar cases following this pattern, for example: https://www.reuters.com/legal/transactional/lawyer-who-used-flawed-ai-case-citations-says-sanctions-unwarranted-2024-08-27/.

While AI can streamline processes, blind reliance without due diligence can lead to serious professional repercussions in corporate settings and pose critical risks in deployment areas like healthcare and critical infrastructure. Human-in-the-loop or human-on-the-loop processes are necessary in overseeing AI outcomes that affect public safety and individual rights. Practitioners across all fields must remember that technology should augment human capability, not replace our judgement.

[Vulnerability] [Data Privacy] Feb 28: 12,000+ Live API Keys and Passwords Found in PLLM Training Data

This vulnerability analysis is based on research conducted by Truffle Security, which discovered over 12,000 live API keys and passwords within training data sourced from Common Crawl, a widely used dataset in pretraining large language models, including DeepSeek. The original research can be found here: https://trufflesecurity.com/blog/research-finds-12-000-live-api-keys-and-passwords-in-deepseek-s-training-data

TLDR:

Truffle Security researchers used TruffleHog, a tool designed to scan for exposed credentials, to analyse Common Crawl's dataset, which is frequently used in AI model training. Their findings revealed that many models trained on this data have ingested real-world API keys, passwords, and other sensitive credentials, with over 12,000 of these keys still active at the time of discovery.

The leaked credentials included access tokens for cloud services, developer platforms, and internal APIs, creating serious risks of unauthorised access, financial exploitation, and further data breaches.

How it Works:

Truffle Security conducted its research using TruffleHog, a tool designed to search for exposed credentials across repositories, logs, and datasets.

TruffleHog works by detecting high-entropy strings, which are likely to be cryptographic keys or API tokens. It applies pattern-matching techniques to identify sensitive values such as OAuth tokens, AWS access keys, and database credentials. Once potential credentials are found, TruffleHog attempts to verify whether the keys are still active, determining whether they are exploitable in real-world scenarios.

By scanning Common Crawl’s dataset, Truffle Security discovered thousands of API keys embedded in publicly available web data. More concerningly, many of these credentials were still valid.

Implications

As Common Crawl is simply a snapshot of the public internet, this issue is not their fault. Many developers unknowingly expose API keys in front-end code, HTML, and JavaScript, leaving them accessible to web crawlers. However, this means any LLM trained on Common Crawl data without sanitisation risks embedding sensitive information into its model weights. If these secrets are memorised by the model, users querying it could potentially extract them.

For developers and security team, DevSecOps processes are more important than ever. Secrets should never be hardcoded into front-end code or publicly accessible logs. Organisations must properly manage API keys, using environment variables, implementing secret scanning tools, and enforcing key rotation policies. With so many live credentials being discovered in a single dataset, evidentially many companies are still failing to follow basic security hygiene.

Notably, once sensitive data is scraped into massive datasets, mitigating the damage becomes significantly harder. One of the challenges Truffle Security faced was disclosure. They could not feasibly contact 12,000+ different website owners to notify them about the leaked credentials. Instead, they worked with major vendors whose API keys were most frequently exposed, helping them revoke or rotate thousands of compromised credentials. harder.

Mileva’s Note: This is less about AI training data being indiscriminate (that’s a given), and more about the state of DevSecOps across the internet. The failure here is developers are still hardcoding API keys and passwords into publicly accessible websites.

Though, this problem gets significantly worse if AI models in deployment start ingesting their own output, particularly internal codebases, private repositories, or proprietary datasets. If an AI system with internal access to code starts retraining on its own collected data, we could see a self-propagating credential where an AI unknowingly injects sensitive information back into a global training pipeline, creating a security risk that scales exponentially.

This is why it’s critical to understand AI companies’ user data collection and usage policies. The concerns mentioned in this Digest around Anthropic’s Computer Use API and its data handling practices raise similar questions about how much transparency AI providers offer when collecting and processing user-generated data. If LLMs are being trained or fine-tuned on live user interactions, it’s not just public API keys at risk, its internal company secrets, private communications, and proprietary information.

[News] [Alignment] Feb 28: Y Combinator Funds Dystopian Sweat-Shop Optimising AI Tool

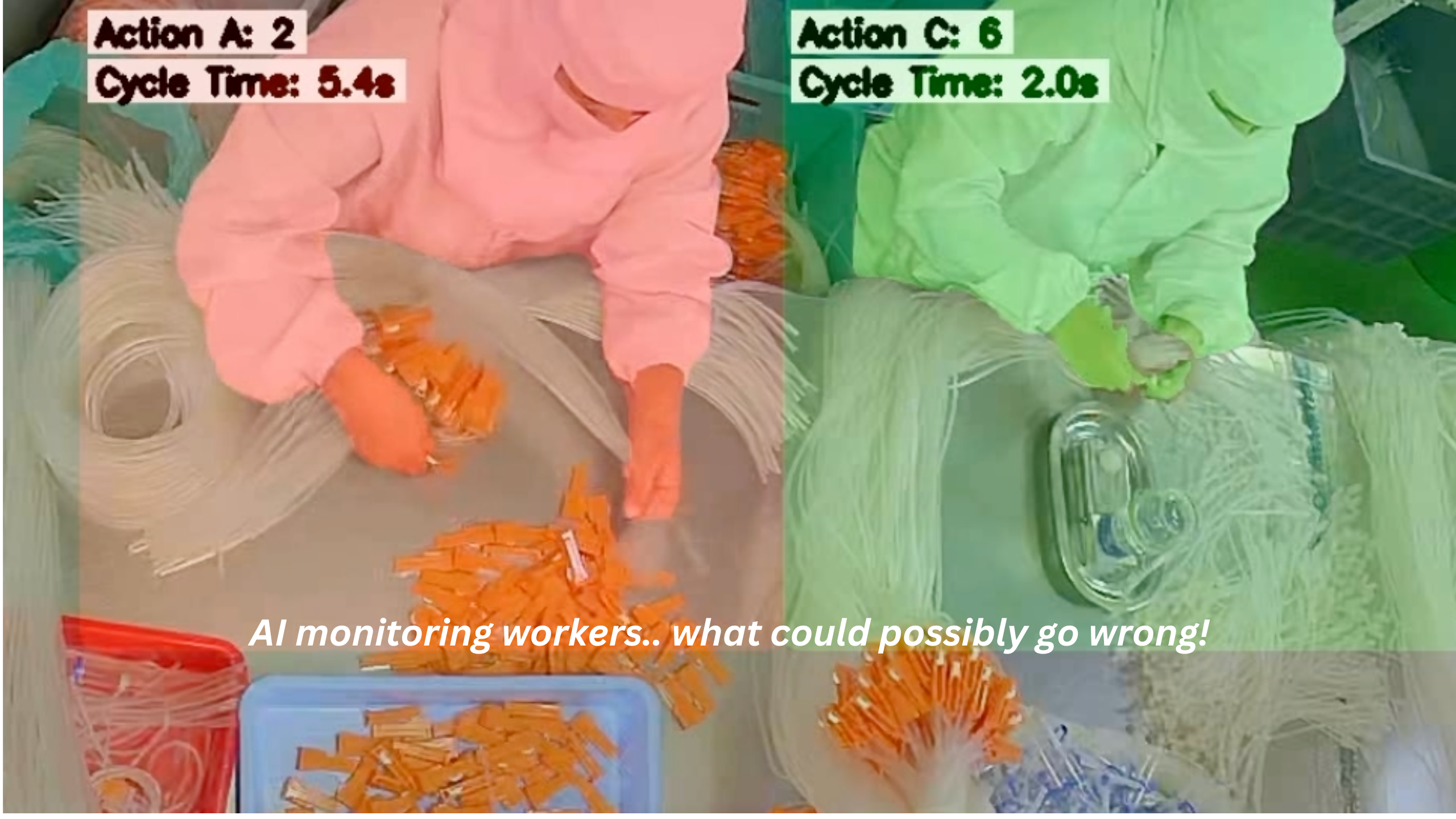

Y Combinator, the renowned startup accelerator, is under fire after promoting Optifye.ai, a startup whose AI-driven factory worker monitoring system has been labelled as promoting "AI sweatshops." The controversy erupted following a demo video that many perceived as dehumanising and exploitative. The original post has been removed, however the contents of the post can be found at: https://www.404media.co/email/b7eb2339-2ea1-4a37-96cc-a360494c214c/

TLDR:

Optifye.ai, part of Y Combinator's Winter 2025 cohort, released a promotional video showcasing their AI system designed to monitor factory workers' efficiency in real-time using computer vision. In the video, co-founders role-played as factory supervisors, addressing a simulated underperforming worker referred to only as "Number 17." They reprimanded the worker, stating, "You haven't hit your hourly output even once today. And you had 11.4% efficiency. This is really bad." The roleplay worker responded, "I've been working all day, it’s been a bad day” and after a performance review, was deemed highly ineffective rather than being offered support.

The video sparked widespread criticism, with commentators labelling the approach as "dystopian" and "dehumanising." One person commented, "Leave it to a bunch of children who've never worked a real job for a single day in their lives—and still haven't graduated college—to come up with some obnoxious slave-driving dystopian s**t like this."

In response to the backlash, Y Combinator removed the video from its platforms and altered Optifye.ai's product description to emphasise "AI line optimization" over "performance monitoring."

Mileva’s Note: While this topic diverges from our usual focus on AI security, we have included this news piece because we care about how our society changes with the adoption of AI. As a powerful organization, Y Combinator is highly influential in setting the standard for startups, driving innovation, and, through funding, reflecting how society wants to use and incorporate AI. Instead, they have funded a startup that benefits corporations at the expense of workers' well-being. Rather than supporting constructive projects that leverage AI to improve working conditions, such as automating hazardous or repetitive tasks to reduce human strain, they have backed an Orwellian system designed for invasive surveillance and worker micromanagement. Although they have deleted the post and will likely offer the standard apology with a revised “ethics policy,” we must follow their actions and those of other startups, corporations, and governments closely to prevent the normalisation of such exploitative technologies.